Wherein Mike Warot describes a novel approach to computing which follows George Gilder's call to waste transistors.

Thursday, October 28, 2010

Applications - image processing, survey plane

I've been doing a lot of experimenting with synthetic focus imagery. Having recently written a tool to help me do image matching, I've begun to appreciate why Hugin gets so bogged down generating and matching control points. The cross correlation of 2d image sets is a huge resource hog. Fortunately, the bitgrid should be quite capable of handling it, because the computations are data local, with the only global data being the source material which loads once per frame, and the output maximum coordinates, again once per frame.
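To make the "data local" point concrete, here is a minimal sketch of brute-force 2d cross correlation. It assumes images are small lists of lists of brightness values, and the name `cross_correlate_peak` is made up for illustration. It is not the method used by Hugin or by the matching tool mentioned above, and plain (unnormalized) correlation like this is biased toward bright regions.

```python
def cross_correlate_peak(image, template):
    """Slide `template` over `image`; return (best_score, row, col) of the best match."""
    img_h, img_w = len(image), len(image[0])
    tpl_h, tpl_w = len(template), len(template[0])
    best = None
    for row in range(img_h - tpl_h + 1):
        for col in range(img_w - tpl_w + 1):
            # Each score reads only one small window of the image: the data-local part.
            score = sum(
                image[row + dr][col + dc] * template[dr][dc]
                for dr in range(tpl_h)
                for dc in range(tpl_w)
            )
            # The only global result is the running maximum and its coordinates.
            if best is None or score > best[0]:
                best = (score, row, col)
    return best


image = [
    [0, 0, 0, 0],
    [0, 0, 1, 2],
    [0, 0, 3, 4],
    [0, 0, 0, 0],
]
template = [
    [1, 2],
    [3, 4],
]
print(cross_correlate_peak(image, template))
```

Every window score is independent of the others, which is why the work spreads naturally across a grid of identical cells.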

I imagine a remote control glider with a pair (later an array) of cameras feeding into a system which correlates the images to generate altitude data. It should be possible once this 2d triangulation is done, with hints from the navigation system, to then generate a 3d image of the area below, with altitude information at least as accurate as the pixel resolution allows.
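A rough sketch of how pixel resolution limits the altitude data, assuming two identical, parallel cameras and the standard pinhole stereo relation (distance = focal length x baseline / disparity). The function names and the numbers in the example are hypothetical, not measurements from any real rig.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Pinhole stereo relation: distance = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_step_per_pixel(focal_px, baseline_m, disparity_px):
    """How much the computed distance changes if the match is off by one pixel."""
    near = depth_from_disparity(focal_px, baseline_m, disparity_px + 1)
    far = depth_from_disparity(focal_px, baseline_m, disparity_px)
    return far - near


# Hypothetical rig: 1000-pixel focal length, cameras 1 m apart, 10-pixel disparity.
print(depth_from_disparity(1000, 1.0, 10))   # distance to the ground, in meters
print(depth_step_per_pixel(1000, 1.0, 10))   # uncertainty from a one-pixel error
```

The step grows with the square of the distance, so a wider camera spacing or a lower flight path buys altitude accuracy.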

If the plane is slow and stable enough to allow multiple overlapping images of the same area, it should be possible to derive super-resolution images using Richardson-Lucy deconvolution.

It all hinges on the question of power consumption of a single bitgrid cell. Something I don't know, but an experienced IC designer should be able to figure out on his lunch break.

Saturday, August 14, 2010

Spreadsheet iteration and other linguistic hits

I'm still trying to figure out the cost/benefit ratio of getting rid of all routing in a real world FPGA device. As an abstraction tool, it's totally cool and cost effective, as there are no static or dynamic power costs in a thought experiment. 8)

As an intermediate stage of compiling a design, there are time costs in translations, but they might be worth it when it comes to the ability to move elements of a system design orthogonal to other design decisions.

Time and persistent effort to get the questions answered will tell. I'm glad I'm still asking questions and pursuing the goal of getting a BitGrid chip built.

Oh... another linguistic hit "hardware spreadsheet" as mentioned here.

Tuesday, July 27, 2010

Reconfigurable Systolic Array

I get a lot of results, mostly academic (which means they are behind a paywall, and thus worthless). It does give me a better way to describe the bitgrid, though.

The bitgrid is a fine grained homogeneous 2d reconfigurable systolic array and/or mesh. It will be verified as to utility by simulation. I hope to popularize it with blogs, social media, and making a game out of it.

It is my belief that the flexibility of the LUT based approach more than makes up for the lack of dedicated routing and compute blocks. Any inactive elements of the circuit are unclocked, and thus should be at very low power.

I'm not sure if I'm going to be a good fit for the OHPC project or not; I've got until August 6 to write a proposal.

Friday, July 09, 2010

Prior art - none found... and I've got a headache

I can't find anything that is close to the bitgrid.

I'm going to try to relax, and wait for the Excedrin to kick in.

Tuesday, July 06, 2010

Learning about chip design

I've got a month until the first DARPA deadline for submissions...

wish me luck.

Sunday, July 04, 2010

What's required for Exascale

And in another opinion piece over at International Science Grid This Week, Irving Wladawsky-Berger, a 37-year IBM veteran, lays out some of the big challenges on the way to achieving exascale computing. Whereas the evolution from terascale to petascale went smoothly using tens to hundreds of thousands of processors from the PC and Unix markets, they will not get us to exascale, writes Wladawsky-Berger. Exascale will require some other kind of major transition in chip architecture, not to mention a completely new programming paradigm.

Thursday, July 01, 2010

SimGrid - Up and running

Wednesday, June 30, 2010

Unrolling programs instead of loops

Monday, June 28, 2010

Introducing Sim02

Thursday, June 24, 2010

Introducing Bitgrid-Sim version 0.03

The simulation shown is a pass through.... in this configuration the bitgrid cell acts as an expensive set of wires, passing signals straight through. It's useful for filler between active cells.

In this simulator you can edit the program (shown in the 4 areas of check boxes) and simulate inputs. The checkboxes corresponding to the current set of inputs are highlighted red as a programming aid. You can save your work as well.

Users can load a few examples, or create their own. In addition to creating a Google Code project site for it, I've also packaged up the Source, Executable and Examples and made them available as a zip file. It's small, and you just unzip it, and it should be ready to use.
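As a rough model of what the check boxes represent, the sketch below assumes each of the 4 areas is a 16-entry truth table driving one output, and that the four input bits are packed into an index with bit 0 least significant. The bit ordering and the function names are assumptions for illustration, not taken from the simulator's source.

```python
def input_index(bits):
    """Pack four input bits into a 0..15 index; bits[0] is the least significant."""
    index = 0
    for position, bit in enumerate(bits):
        index |= (bit & 1) << position
    return index


def pass_through_program():
    """Four 16-entry tables; table k is checked wherever input bit k is 1."""
    return [[(index >> k) & 1 for index in range(16)] for k in range(4)]


def evaluate(program, bits):
    """Look up one output bit per table for the current inputs."""
    index = input_index(bits)
    return [table[index] for table in program]


program = pass_through_program()
inputs = [1, 0, 1, 1]
print(input_index(inputs))        # which checkbox in each area would be highlighted
print(evaluate(program, inputs))  # pass-through: outputs equal inputs
```

Any other 4-input, 4-output function is just a different pattern of checked boxes in the same four tables.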

Wednesday, June 23, 2010

Beyond the Petaflop - How Bitgrid could meet DARPA's needs ahead of schedule.

I think it could be done in 3 years if it didn't have to fit in one rack. It could have already been done, had there not been such a heavy emphasis on the von Neumann architecture for the past 40 years.

Imagine a bit slice processor... with perhaps 1000 transistors. Put those on the same die in a 1000x1000 grid; this would require 10^9 transistors. You could clock them at the nice sane clock speed of 1 GHz. That would fit in a die the same size as a current generation CPU. That's 10^6 slices times 10^9 cycles/second, or 10^15 bit computes per second, on a practical size die, with current technology.

Even if you lost 99.9% of the compute efficiency in shuffling bits around to do a floating point operation, you could still do 10^12 Floating point operations per second, on a prototype chip... today.

The chip would be easy to test, because all of the bit slices would be identical, so the testing of each part could be done in parallel... perhaps 1 second of test time per die. (Testing is a big part of cost when it comes to chips.) The chips would cost somewhere around $10 each.

If you allow me to continue with my estimate of 10^12 flops per chip, and it were possible to build a grid of 1000x1000 of them... that takes you to the magic 10^18 Flops that DARPA wants, for a cost of about $10,000,000.

10^18 operations per second, with 10^15 transistors, clocked at 1 GHz. Feasible... yes... but it does require you to give up sequential programming, and think in terms of graph flow.
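The arithmetic above can be checked in a few lines. Every figure here is one of the post's own estimates (1000 transistors per slice, 1 GHz, a 99.9% efficiency loss, $10 per chip); the variable names are just labels, and the cost counts chips only.

```python
transistors_per_slice = 1_000
slices_per_chip = 1_000 * 1_000          # 1000x1000 grid on one die
clock_hz = 10**9                         # 1 GHz

transistors_per_chip = transistors_per_slice * slices_per_chip
bit_ops_per_chip = slices_per_chip * clock_hz

# Losing 99.9% of the compute to bit shuffling leaves 1 part in 1000.
flops_per_chip = bit_ops_per_chip // 1_000

chips = 1_000 * 1_000                    # 1000x1000 grid of chips
system_flops = flops_per_chip * chips
system_transistors = transistors_per_chip * chips
chip_cost_dollars = 10 * chips           # chips only, at $10 each

print(transistors_per_chip, bit_ops_per_chip, flops_per_chip)
print(system_flops, system_transistors, chip_cost_dollars)
```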

It's called bitgrid, I thought of it around 1981.... and I've written some of this up at http://bitgrid.blogspot.com

Wednesday, April 07, 2010

Enabling technology on its way from HP

Thursday, July 31, 2008

HSRA - Close, but no cigar

I found the Hierarchical Synchronous Reconfigurable Array, which is part of the BRASS Project at the University of California at Berkeley, via a Google search.

They figured out that long interconnects are a problem when you're trying to get speed out of a logic array, but don't seem to be willing to give up the big complex interconnection logic.

I'd call this a step closer to the bit grid, but definitely not a hit.

Tuesday, July 29, 2008

Instruction Systolic Array

The idea is intriguing because it offers a way to get the benefits of the systolic array without having to have all of the bandwidth necessary to update all of the cells at once. The web site is well thought out and informative as well. I like the illustration of the matrix multiply using their concept.

There are a lot of architectures that got skipped along the way to our current crop of FPGA and other programmable logic circuits. I think that the systolic array warrants further consideration as well as the BitGrid.

Friday, July 18, 2008

BitGrid - A minimalist systolic array

systolic array

The bitgrid as I imagined it way back in the 1980s is a systolic array. It takes information, and processes pieces of it simultaneously. It's an extension of the then-common idea of a bit-slice processor, which was used to create really fast custom processors before the microprocessor really took off.

The BitGrid is a minimalist bidirectional systolic array. According to the Wikipedia entry on the subject, the pros and cons of systolic arrays are:

Pros

- Faster

- Scalable

Cons

- Expensive

- Highly specialized for particular applications

- Difficult to build

The fact that I want to process 4 bits at a time means that each cell is almost trivial: a 4-bit-wide EEPROM table with 4 address lines, for a total of 64 bits of information. This makes it cheap and easy to design and build, pretty much wiping out the Cons in the list above. I don't have a way to get silicon yet, but I expect it should be a matter of getting a cell and its addressing logic right, then replicating a big grid of these onto a single chip.

I've figured out that an n-bit multiplier requires n*(n-1) cells. A divider takes the same number of cells. Adding and subtracting n bits requires n cells.
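To illustrate the "n cells for n bits" count for addition, here is a sketch in which each cell's 16-entry table is programmed as a full adder and the cells are chained by their carries. The bit assignments (address bits a, b, carry in; data bits sum, carry out) are one plausible mapping chosen for the example, not necessarily the layout I had in mind, and the loop evaluates the chain in one pass rather than modeling cell-to-cell timing.

```python
def full_adder_table():
    """16-entry table. Address bits: 0 = a, 1 = b, 2 = carry in, 3 = unused.
    Data bits: 0 = sum, 1 = carry out."""
    table = []
    for address in range(16):
        a = address & 1
        b = (address >> 1) & 1
        carry_in = (address >> 2) & 1
        # a + b + carry_in is 0..3, which is exactly (carry_out, sum) in binary.
        table.append(a + b + carry_in)
    return table


def add_with_cells(x, y, n_bits):
    """Add two n-bit numbers using one table lookup (one cell) per bit position."""
    table = full_adder_table()
    carry = 0
    result = 0
    for i in range(n_bits):
        address = ((x >> i) & 1) | (((y >> i) & 1) << 1) | (carry << 2)
        out = table[address]
        result |= (out & 1) << i
        carry = (out >> 1) & 1
    return result, carry


print(add_with_cells(5, 6, 4))   # fits in 4 bits
print(add_with_cells(9, 8, 4))   # overflows into the final carry
```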

It's amazing how little of this can be found via a straightforward Google search, unless you know exactly which magic words to use. Semantic web searches will add value, should they ever actually get here.

Sunday, October 15, 2006

Logisim

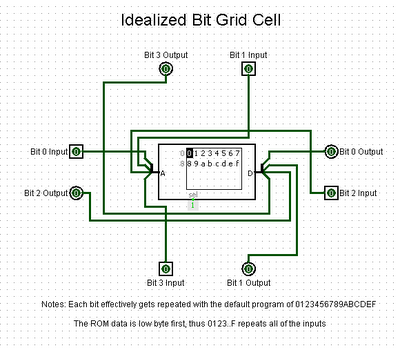

Earlier this week I found Carl Burch's wonderful Logisim, which does digital logic simulation. It took a few hours of tweaking, and one flash of insight (below)... but here is what a single BitGrid cell looks like in an idealized format.

The cell consists of a single 16x4 RAM cell (4 bits address, 4 bits data). I used a ROM in the simulation to allow it to persist across saves, and simplify the layout.

There are any number of ways you could wire this thing up... the flash of insight I had was that I wanted it to be very simple to turn a cell into a simple pass-through repeater. I figured that if addresses 0-F were programmed with contents 0-F, and it just worked that way... it would be easiest to understand. This leads naturally to the layout you see pictured here.

If you want to see for yourself, here is the Logisim Circuit file. (You'll have to save it, then rename it to *.circ for Logisim due to limitations of my web host at 1and1.com)

I welcome comments and suggestions.

--Mike--

Friday, February 24, 2006

Signs of life

It's not dead yet! 8)

Thursday, March 17, 2005

BitGrid in 25 words or less

concatenated 4 bit number becomes index

One lookup table per neighbor

Wednesday, March 16, 2005

Bitgrid pro and con - AKA the Thesis

If the Bitgrid is such a great idea, why haven’t I heard of it before?

One word answer: “Efficiency”

My review of the current literature, aided by my trusty pal Google, has shown that the past 25 years of programmable/custom logic design has been focused on serving one God, efficiency. All of the designs I’ve seen (admittedly a small subset because I’m not a professional circuit engineer) optimize on some or all of these common goals:

- Speed

- Power

- Size

- Circuit complexity

- Unit cost

They do this for a very good set of reasons. You want the lowest power dissipation because it makes it easier to feed and clean up after. You want the fastest speed because that is the driving factor for using hardware instead of software. You want the smallest design size so that you use less die area, and have less chance of losing a chip to a defect. The circuit complexity goal drives a huge investment in design tools to automate design tasks as much as possible.

The things that are often traded away for these goals in a chip design are:

- Flexibility

- Fault tolerance

- Engineering costs

The primary reason for going with hardware in the first place is usually speed. If speed is not an issue, then it is usually a good idea to do a given task in software. Software is infinitely malleable, and far easier to patch and update.

Fault tolerance is usually excluded from designs of custom chips because it is difficult to achieve, and is better addressed by testing and quality control measures. Only when a given feature of a design is homogeneous, such as in RAM or ROM, is the option of spares included in a design.

Engineering costs are usually considered last in a mass produced chip, but they are never trivial. The processes are optimized to automate as much of the design work as possible away from human engineers, but there are always going to be complexity limitations imposed by the heterogeneity of the elements in a given Programmable Logic / Custom ASIC design.

When viewed from the perspective of the design community I’ve observed, it becomes obvious why nobody has built a bitgrid yet… it’s inefficient as hell by their criteria. An insider would never seriously consider a bitgrid design.

That, gentle reader, is why you haven’t heard of the bitgrid before.

Reasons to consider the bitgrid

One word answer: “Efficiency”

Only in this case, a different set of parameters to optimize on:

- Flexibility

- Fault tolerance

- Testing time

- End users

The bitgrid is based on a single basic component, with a known, easily comprehended design, in an orthogonal grid. The homogeneous nature of the grid makes it trivial to relocate a given portion of an application program.

It can route around a bad cell, if one is found. Each and every cell is a programmable wire at minimum utilization. As long as extensive faults are not present, and slack is available, it should be feasible to route around bad cells in almost any design.
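A sketch of what routing around bad cells could look like, assuming signals may only step between the four nearest neighbors and that every good cell is free to act as a programmable wire. Breadth-first search is used here purely as an illustration; the function name and grid coordinates are hypothetical, and a real tool would also have to account for cells already in use.

```python
from collections import deque


def route_around(width, height, bad_cells, start, goal):
    """Shortest path of (x, y) cells from start to goal that avoids bad cells,
    or None if the faults cut the two ends off from each other."""
    bad = set(bad_cells)
    if start in bad or goal in bad:
        return None
    previous = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            path = []
            while cell is not None:
                path.append(cell)
                cell = previous[cell]
            return path[::-1]
        x, y = cell
        for nxt in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            nx, ny = nxt
            inside = 0 <= nx < width and 0 <= ny < height
            if inside and nxt not in bad and nxt not in previous:
                previous[nxt] = cell
                queue.append(nxt)
    return None


# 3x3 grid with two bad cells blocking the direct route along the top row.
print(route_around(3, 3, [(1, 0), (1, 1)], (0, 0), (2, 0)))
```

With enough faults in a line the search returns `None`, which is the "extensive faults" case where no slack remains.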

Because it’s possible to test the RAM that stores the programming along with the bitgrid cells one at a time, it should be very easy to quickly and confidently test a chip with a minimum of testing equipment complexity.

Because of the simple nature of the bitgrid, a set of graphical tools for design and debugging can be built and will be applicable to any implementation of the chip. The parameters of the IO pins and array size are the main constraints to give to the tools. There are no design heterogeneity obstacles to complicate tool development.

There are, of course, some big trade-offs made, including:

- Speed

- Latency

- Power

- Die Size

- Unit cost

Compared to an ASIC, a bitgrid will always be slower, use more power, consume a larger die space and cost more per unit. A given bit will have to traverse at least 4 gates per cell, just to emulate a wire. The end user will be compelled to expend some time optimizing their programming to fit in the smallest available bitgrid device applicable, of course.

Summary

I’m confident that as Moore’s law continues to drive down transistor prices, the bitgrid will come to be seen as a viable computing architecture for select applications, and may possibly mature into general use over time. The closest analogy I can evoke at this time is to the debates that took place with the introduction of high-level computer languages. That shift occurred when the cost of computation was driven below the cost of the programmers. I believe this transition is on its way for silicon.

I believe the best way to predict the future is to invent it. I hope you like my invention.

--Mike--

March 16, 2005

Friday, March 11, 2005

Why we need a Free Hardware Foundation

Specifically, if my bitgrid idea actually pans out, I'd like to let anyone use it in a design according to an equivalent of the GPL for hardware. They would then be bound to do the same for their designs, so that improvements can get worked into the technology, and we all benefit.

The main threat I want to hold off is that of submarine patents. If we can build an open database of ideas which can be shared by all, it could be a very powerful tool. I'm not a lawyer, so I don't know if patent or copyright law would be the best place to try to build a GPL equivalent, but I'm sure it's time to figure it out.

[update]

An analogy made in this post by Eric Ste-Marie in 1999:

On the other hand, the day that we can have a processor definition from the internet, download that definition in a "processor makng machine", add whatever material is needed, press a button, wait 5 minutes and "DING!" your processor is ready; well this day, maybe free hardware foundation will become popular and worth the effort. Until that time it will be a hobbyist thing and won't have any impact on the computer world compared to Free software.

Perhaps the bitgrid could fill that role by being virtual hardware?

About Me

- Mike Warot

- I fix things, I take pictures, I write, and marvel at the joy of life. I'm trying to leave the world in better condition than when I found it.